Infrastructure

Support for the three latest major releases:

19.4.2.7 19.3.7 19.1.14

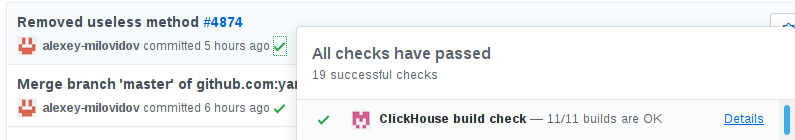

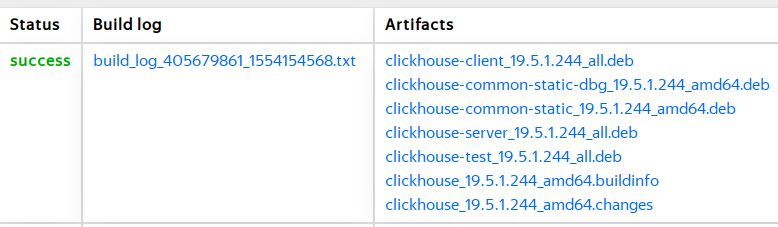

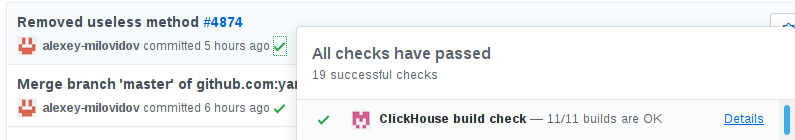

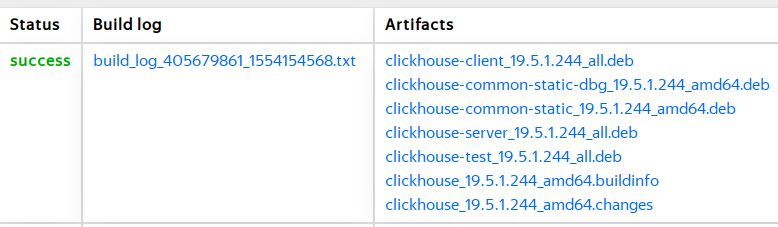

Publicly available per commit builds:

aka "Data skipping indices"

Collect a summary of column/expression values for every N granules.

Use this summaries to skip data while reading.

Indices are available for MergeTree family of table engines.

SET allow_experimental_data_skipping_indices = 1;

CREATE TABLE table

(...

INDEX name expr TYPE type(params...) GRANULARITY n

...)

ALTER TABLE ... ADD INDEX name expr

TYPE type(params...) GRANULARITY n

ALTER TABLE ... DROP INDEX name

minmax

— summary is just min/max boundaries of values;

— use when values are correlated to table order;

or distributed locally; or sparse;

set(k)

— summary is a set of all distinct values, but not larger than k;

— use when values are sparse or have low cardinality;

— reasonable values of k is about hundred;

Used for comparison and IN operators.

Full text search indices (highly experimental)

ngrambf_v1(chars, size, hashes, seed)

tokenbf_v1(size, hashes, seed)

Used for equals comparison, IN and LIKE.

SELECT count()

FROM test.hits

WHERE URLDomain LIKE 'aena.es'

┌─count()─┐

│ 1 │

└─────────┘

Processed 8.87 million rows

SET allow_experimental_data_skipping_indices = 1;

ALTER TABLE test.hits

ADD INDEX

domain_index URLDomain

TYPE set(1000) GRANULARITY 1;

OPTIMIZE TABLE test.hits FINAL;

SELECT count()

FROM test.hits

WHERE URLDomain LIKE 'aena.es'

┌─count()─┐

│ 1 │

└─────────┘

Processed 65.54 thousand rows

col type CODEC(codecs...)

Available codecs:

— LZ4 (default);

— ZSTD; — level can be specified: ZSTD(1);

— LZ4HC; — level can be specified;

— NONE;

— Delta(N); — N is the size of the data type in bytes.

Codecs can be chained together:

time DateTime CODEC(Delta(4), LZ4)

— Delta is not a compression itself, it must be chained with a second codec.

Per-column codecs have priority over <compression> config settings.

SELECT

name,

type,

formatReadableSize(data_compressed_bytes) AS compressed,

formatReadableSize(data_uncompressed_bytes) AS uncompressed,

data_uncompressed_bytes / data_compressed_bytes AS ratio,

compression_codec

FROM system.columns

WHERE (database = 'test') AND (table = 'hits')

ORDER BY data_compressed_bytes DESC

LIMIT 10

┌─name────────────┬─type─────┬─compressed─┬─uncompressed─┬──────────────ratio─┬─compression_codec─┐

│ Referer │ String │ 180.19 MiB │ 582.99 MiB │ 3.2353881463220975 │ │

│ URL │ String │ 128.93 MiB │ 660.58 MiB │ 5.123600238954646 │ │

│ Title │ String │ 95.29 MiB │ 595.01 MiB │ 6.244488505685867 │ │

│ WatchID │ UInt64 │ 67.28 MiB │ 67.70 MiB │ 1.0062751884416956 │ │

│ URLHash │ UInt64 │ 37.09 MiB │ 67.70 MiB │ 1.8254645825020759 │ │

│ ClientEventTime │ DateTime │ 31.42 MiB │ 33.85 MiB │ 1.0772947535816229 │ │

│ EventTime │ DateTime │ 31.40 MiB │ 33.85 MiB │ 1.0780959105750834 │ │

│ UTCEventTime │ DateTime │ 31.39 MiB │ 33.85 MiB │ 1.0783175064258996 │ │

│ HID │ UInt32 │ 28.28 MiB │ 33.85 MiB │ 1.19709852035762 │ │

│ RefererHash │ UInt64 │ 27.68 MiB │ 67.70 MiB │ 2.445798559204409 │ │

└─────────────────┴──────────┴────────────┴──────────────┴────────────────────┴───────────────────┘

ALTER TABLE test.hits

MODIFY COLUMN ClientEventTime CODEC(Delta(4), LZ4)

Changes are applied lazily: only for new data and while merging.

ALTER TABLE test.hits

UPDATE ClientEventTime = ClientEventTime WHERE 1

— a trick to rewrite column data on disk.

— also executed in background, look at system.mutations table.

SELECT

name,

type,

formatReadableSize(data_compressed_bytes) AS compressed,

formatReadableSize(data_uncompressed_bytes) AS uncompressed,

data_uncompressed_bytes / data_compressed_bytes AS ratio,

compression_codec

FROM system.columns

WHERE (database = 'test') AND (table = 'hits') AND (name = 'ClientEventTime')

ORDER BY data_compressed_bytes DESC

LIMIT 10

┌─name────────────┬─type─────┬─compressed─┬─uncompressed─┬──────────────ratio─┬─compression_codec────┐

│ ClientEventTime │ DateTime │ 19.47 MiB │ 33.85 MiB │ 1.7389218149308554 │ CODEC(Delta(4), LZ4) │

└─────────────────┴──────────┴────────────┴──────────────┴────────────────────┴──────────────────────┘

ALTER TABLE test.hits

MODIFY COLUMN

ClientEventTime CODEC(Delta(4), ZSTD),

UPDATE ClientEventTime = ClientEventTime WHERE 1

┌─name────────────┬─type─────┬─compressed─┬─uncompressed─┬─────────────ratio─┬─compression_codec────────┐

│ ClientEventTime │ DateTime │ 14.00 MiB │ 33.85 MiB │ 2.417489322394391 │ CODEC(Delta(4), ZSTD(1)) │

└─────────────────┴──────────┴────────────┴──────────────┴───────────────────┴──────────────────────────┘

Just replace String to LowCardinality(String)

for string fields with low number of unique values.

... and it will magically work faster.

For high cardinality fields it will work fine but pointless.

Examples:

— city name — ok;

— domain of URL — ok;

— search phrase — bad;

— URL — bad;

SELECT count()

FROM hits_333

WHERE URLDomain LIKE 'aena.es'

┌─count()─┐

│ 101 │

└─────────┘

1 rows in set. Elapsed: 0.446 sec.

Processed 333.36 million rows, 7.32 GB

(747.87 million rows/s., 16.43 GB/s.)

ALTER TABLE hits_333

MODIFY COLUMN

URLDomain LowCardinality(String)

Ok.

0 rows in set. Elapsed: 16.228 sec.

SELECT count()

FROM hits_333

WHERE URLDomain LIKE 'aena.es'

┌─count()─┐

│ 101 │

└─────────┘

1 rows in set. Elapsed: 0.244 sec.

Processed 333.36 million rows, 1.72 GB

(1.37 billion rows/s., 7.04 GB/s.)

Two times faster!

Multiple substring search

Multiple regexp search

Fuzzy string comparison and search

Fuzzy regexp match

SELECT count()

FROM hits_100m

WHERE multiSearchAny(URL,

['chelyabinsk.74.ru', 'doctor.74.ru', 'transport.74.ru',

'm.74.ru', 'chel.74.ru', 'afisha.74.ru', 'diplom.74.ru',

'//chel.ru', 'chelyabinsk.ru', 'cheldoctor.ru'])

— multiSearchAny

— multiSearchFirstPosition

— multiSearchFirstIndex

— multiSearchAllPositions

+ -UTF8, -CaseInsensitive, -CaseInsensitiveUTF8

— multiMatchAny

— multiMatchAnyIndex

— multiFuzzyMatchAny

— multiFuzzyMatchAnyIndex

— ngramDistance

+ -UTF8, -CaseInsensitive, -CaseInsensitiveUTF8

SELECT DISTINCT

SearchPhrase,

ngramDistance(SearchPhrase, 'clickhouse') AS dist

FROM hits_100m_single

ORDER BY dist ASC

LIMIT 10

┌─SearchPhrase────┬───────dist─┐

│ tickhouse │ 0.23076923 │

│ clockhouse │ 0.42857143 │

│ house │ 0.5555556 │

│ clickhomecyprus │ 0.57894737 │

│ 1click │ 0.6 │

│ uhouse │ 0.6 │

│ teakhouse.ru │ 0.625 │

│ teakhouse.com │ 0.64705884 │

│ madhouse │ 0.6666667 │

│ funhouse │ 0.6666667 │

└─────────────────┴────────────┘

10 rows in set. Elapsed: 1.267 sec. Processed 100.00 million rows, 1.52 GB

(78.92 million rows/s., 1.20 GB/s.)

(contributed by TouTiao/ByteDance)

SELECT * FROM hdfs(

'hdfs://hdfs1:9000/file',

'TSV', 'id UInt64, text String);

INSERT INTO TABLE FUNCTION hdfs(

'hdfs://hdfs1:9000/file',

'TSV', 'id UInt64, text String) VALUES ...

CREATE TABLE (...) ENGINE =

HDFS('hdfs://hdfs1:9000/file', 'TSV');

Drawbacks: not all authentication methods supported.

Protobuf

— efficient implementation, no excessive copies/allocations

(ClickHouse style);

— transparent type conversions between Proto's and ClickHouse types (UInt8, Int64, DateTime <-> sint64, uint64, sint32, uint32, String <-> bytes, string, etc.);

— support for Nested types via repeated Messages or parallel repeated fields;

— format_schema setting must be specified.

Parquet

— columnar format; naturally implemented without unpacking of columns;

— transparent type conversions also supported.

IPv4 and IPv6 data types

(contributed by Altinity)

SELECT DISTINCT ClientIP6

FROM test.hits

LIMIT 10

┌─ClientIP6─┐

│

│ ��m��� │

│ �� � │

│ ���9EY │

│ ���9�� │

│ ��]�� │

│ ��T/�� │

│ ��m�(� │

│ ��_N( │

└───────────┘

SELECT DISTINCT IPv6NumToString(ClientIP6)

FROM test.hits

LIMIT 10

┌─IPv6NumToString(ClientIP6)─┐

│ ::ffff:128.70.160.13 │

│ ::ffff:109.167.145.182 │

│ ::ffff:46.8.32.249 │

│ ::ffff:178.57.69.89 │

│ ::ffff:178.57.159.237 │

│ ::ffff:2.93.132.186 │

│ ::ffff:84.47.183.210 │

│ ::ffff:109.184.40.252 │

│ ::ffff:95.78.40.21 │

└────────────────────────────┘

ALTER TABLE test.hits

MODIFY COLUMN

ClientIPv6 IPv6

SELECT DISTINCT ClientIP6

FROM test.hits

LIMIT 10

┌─ClientIP6──────────────┐

│ ::ffff:93.77.44.100 │

│ ::ffff:176.59.183.15 │

│ ::ffff:46.0.180.32 │

│ ::ffff:46.0.180.50 │

│ ::ffff:178.69.88.247 │

│ ::ffff:92.248.134.86 │

│ ::ffff:91.124.115.91 │

│ ::ffff:178.126.222.105 │

│ ::ffff:37.229.239.173 │

└────────────────────────┘

without subqueries

(I will not show you an example.

It should work as in any mature SQL DBMS.)

Drawbacks:

— only equi-JOIN;

— no condition pushdown to the right hand side;

(work in progress)

To extend ORDER BY clause in your favourite

Replacing/Aggregating/Collapsing MergeTrees

A column must be added at the same time:

ALTER TABLE t

ADD COLUMN z UInt64

MODIFY ORDER BY (x, y, z)

for distributed queries

(contributed by Spotify)

SET optimize_skip_unused_shards = 1

<merge_tree>

<use_minimalistic_part_header_in_zookeeper>

1

</use_minimalistic_part_header_in_zookeeper>

</merge_tree>

Not enabled by default.

Support for the three latest major releases:

19.4.2.7 19.3.7 19.1.14

Publicly available per commit builds:

Public Test Datasets

Automated per-commit performance tests

Added UBSan and TSan (along with ASan).

Adaptive index granularity

Row-level security

Column and table TTL expressions

Indexing by z-Order curve

DDL queries for dictionaries

ASOF JOIN (by Citadel Securities)

S3 import/export

Multiple storage volumes

Role Based Access Control

Aggregate functions for machine learning

Merge JOIN

Workload management

Optimization of ORDER BY and GROUP BY with table's order key.

Web site: https://clickhouse.com/

Google groups: https://groups.google.com/forum/#!forum/clickhouse

Maillist: [email protected]

Telegram chat: https://telegram.me/clickhouse_en

GitHub: https://github.com/ClickHouse/ClickHouse/

Twitter: https://twitter.com/ClickHouseDB